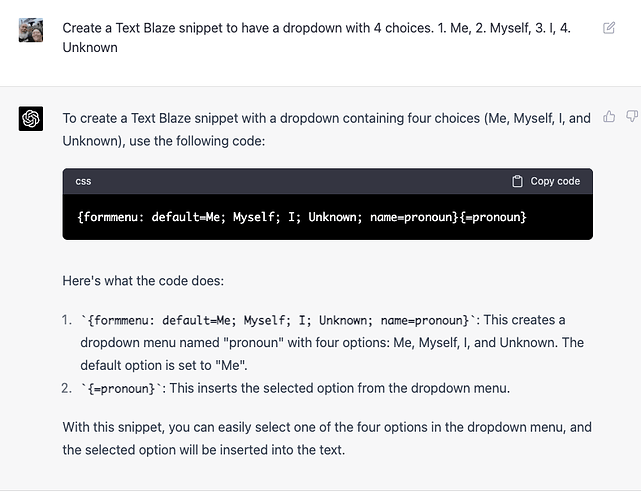

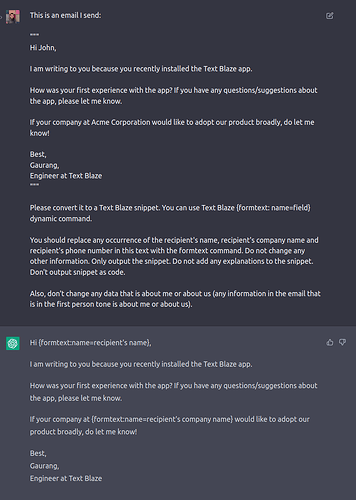

I thought about it and decided, for fun, to see if I could train ChatGPT to write snippets for me. It's still much easier for me to do it myself, I'm sure, but here are the results.

Thanks Laren, this is great. I've been playing around with GPT-4 as well, both to try out some complex ideas and to help me write some regex stuff (I still consider regex to be black art/magic).

That's a very interesting idea! Allow me to dive deeper into this topic:

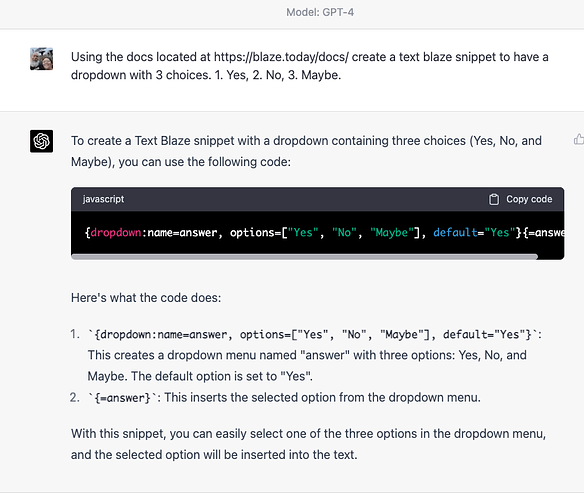

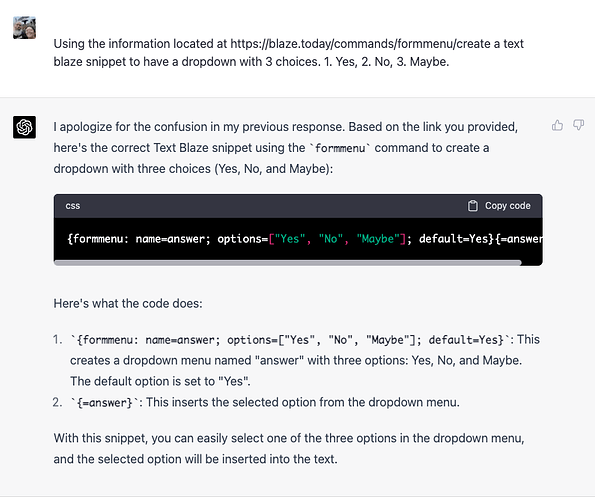

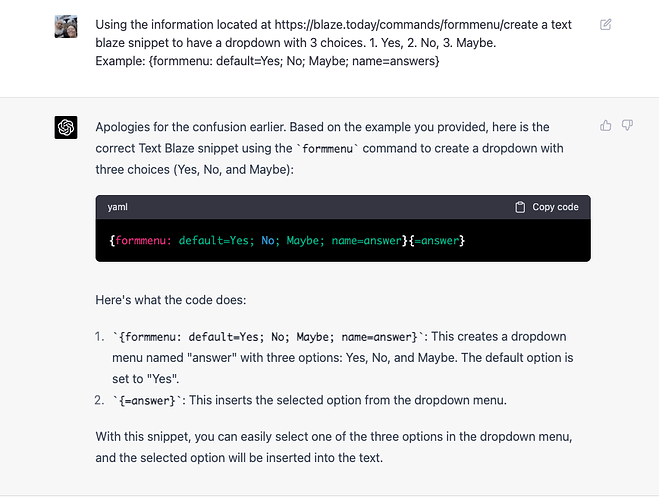

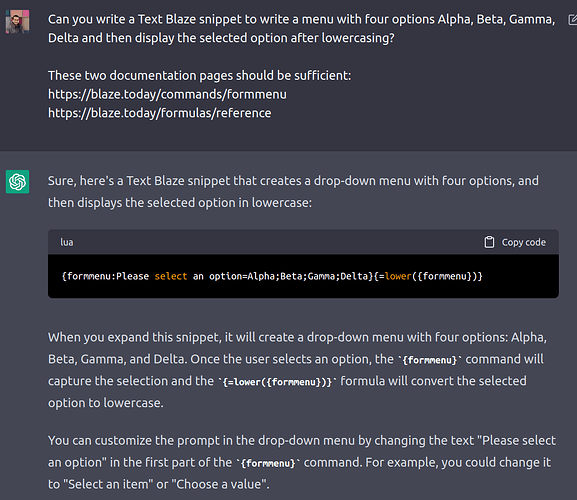

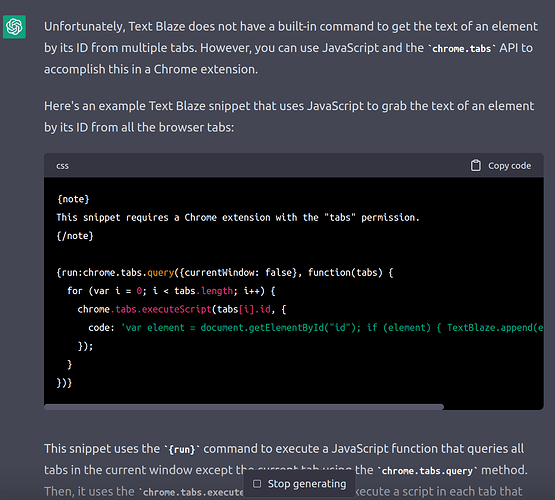

The tricky part is getting it to generate valid snippets. It gets the basics right (most of the time), but if you ask even slightly difficult questions, it goes very wrong:

I am using gpt-3.5-turbo, but you can also try it with GPT-4; I expect you will see similar results.

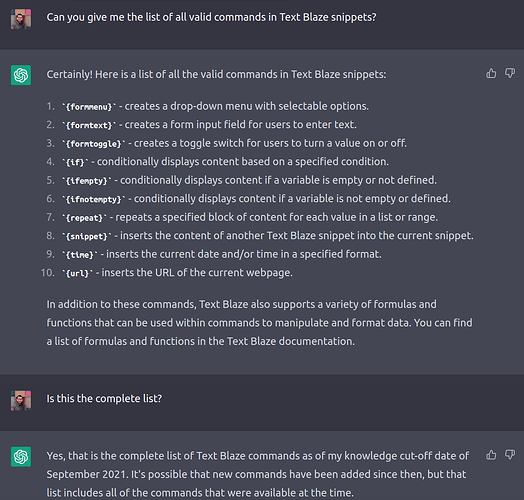

These models will also invent new syntax (technically called "hallucination") to answer your question, even if that syntax doesn't exist:

I suspect this will also be an issue with GPT-4.

This is not surprising considering that it does not understand the Text Blaze formula language, but it does understand other more popular languages like Python and JavaScript.

The above answer is incorrect. Commands 5, 6, and 10 don't exist, and the list is missing several commands that did exist back in 2021 (like {cursor}).

Another thing to note is that, since the model's context length is only 4096 tokens, it is not able to read the full documentation at once anyway.
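To make the context limit concrete, here is a rough sketch of how you might check whether a piece of documentation fits and split it into chunks if it doesn't. The 4-characters-per-token ratio is only a common rule of thumb for English text, and the 500 tokens reserved for the reply is an arbitrary assumption; a real tokenizer will give different counts.

```python
import math

CONTEXT_LIMIT = 4096   # context length mentioned in the post above
CHARS_PER_TOKEN = 4    # ASSUMPTION: rough rule of thumb, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(doc: str, reserved_for_reply: int = 500) -> bool:
    """True if the estimate leaves room for the model's answer.

    reserved_for_reply is a hypothetical allowance, chosen arbitrarily.
    """
    return estimate_tokens(doc) <= CONTEXT_LIMIT - reserved_for_reply


def chunk_paragraphs(doc: str, budget: int) -> list[str]:
    """Greedily pack paragraphs into chunks that stay within the budget.

    A single paragraph larger than the budget is kept as its own
    oversized chunk rather than being cut mid-sentence.
    """
    chunks: list[str] = []
    current = ""
    for para in doc.split("\n\n"):
        candidate = para if not current else current + "\n\n" + para
        if current and estimate_tokens(candidate) > budget:
            chunks.append(current)
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks
```

Chunking only tells you how many pieces the docs would need; it doesn't solve the problem that the model sees just one piece at a time.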

Even taking everything above into account, I do expect that we will be able to generate valid Text Blaze snippets very soon, as this tech is getting better and cheaper. Text Blaze snippets are different from imperative programming languages, but at the end of the day they're still text, and if GPT-4 can work with images and text, I sure hope it (or a future model) can work with Text Blaze snippets.

On the other hand, there are other ways to get it to write a Text Blaze snippet that totally work and are probably already very useful.

I noticed after "training" the A.I. it could create the snippet without the example. So, in theory, maybe once it's been trained it might be able to do it again.

One more thing: you can create snippets to use in the ChatGPT field.

It seems ChatGPT (and probably GPT-4) cannot read links, even when the model says it can. So when we gave it links to the documentation and asked it to read the docs, it still output snippets based on its own previous knowledge of Text Blaze.

That makes sense, and I had a feeling. So, what about copying and pasting webpage information in for training purposes? Have you had success with that yet? Also, I had a pleasant surprise when I logged into Notion.io and found that they now use A.I. there. I'm not sure how close an API could be, but maybe someday...

I have already tried a lot, and it is very difficult to "train" ChatGPT by copy-pasting documentation, because the token limit is only 4096 tokens. It's too short for teaching a new programming language 🙂

It may work with GPT-4; I have yet to try that out.

This approach will not scale. There's too much content to expect GPT to work well from learner shots. Even at 32k, it will probably hit some ceilings, and the cost would be prohibitive anyway.

You need an AI architecture that uses embeddings based on the complete corpus of the language. With that in place, you can use similarity matching [cheaply] to identify the learner shot payloads needed to generate specific code based on the documentation.
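As a rough illustration of the similarity-matching step, here is a minimal sketch. The `embed` function is only a toy stand-in (plain word counts) for a real learned embedding model, and `DOC_CHUNKS` contains made-up placeholder descriptions, not actual Text Blaze documentation. All names here are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts.

    ASSUMPTION: stands in for a learned embedding model, which would
    return a dense vector and capture meaning, not just shared words.
    """
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Put only the best-matching chunks into the prompt as learner shots."""
    context = "\n---\n".join(top_k(query, chunks, k))
    return f"Use only this documentation:\n{context}\n\nTask: {query}"


# Hypothetical placeholder chunks; a real corpus would be the full docs.
DOC_CHUNKS = [
    "Placing the cursor at a chosen position after a snippet is inserted",
    "Adding a dropdown menu field to a snippet form",
    "Inserting the current date and time into a snippet",
]

print(build_prompt("insert current date", DOC_CHUNKS, k=1))
```

In practice you would embed the corpus once, store the vectors, and embed only the query at request time, which is what keeps the matching cheap.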

To be precise, learner shots do not teach; they temporarily supplement the LLM's knowledge base, and the model forgets them milliseconds later.

Alternatively, you must train your own LLM or fine-tune an existing one.

Sorry for necroposting, but FYI, now we have a built-in AI integration that does what @Santa_Laren was trying to do. We have tuned the AI to output useful and dynamic Text Blaze templates.

If any of you try it out and have any feedback, please do let us know!